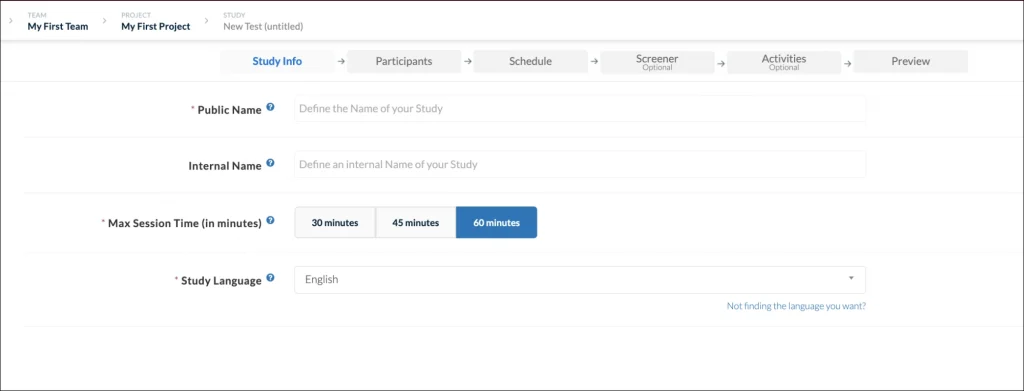

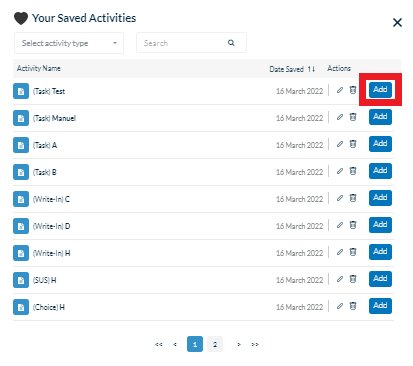

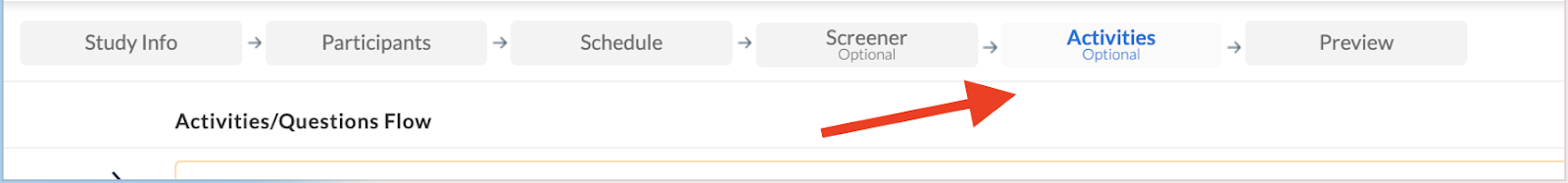

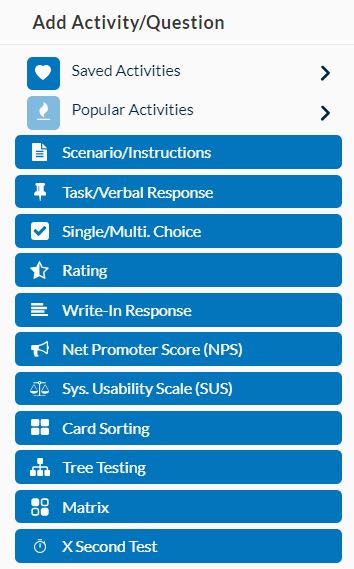

With Userlytics' easy-to-use test builder, you can create custom UX tests for your own participants or for participants from our global panel of over 1.6 million participants. The Activities tab in your test builder lets you choose from a variety of task and question types for your usability test(s). Each task comes with a generic template that you can adjust and edit as you please. Strategically choosing tasks that will draw out the insights you are looking for is key to conducting a successful usability study.

Below, we go over 10 different types of tasks, and how they can help you achieve the UX insights you are looking for.

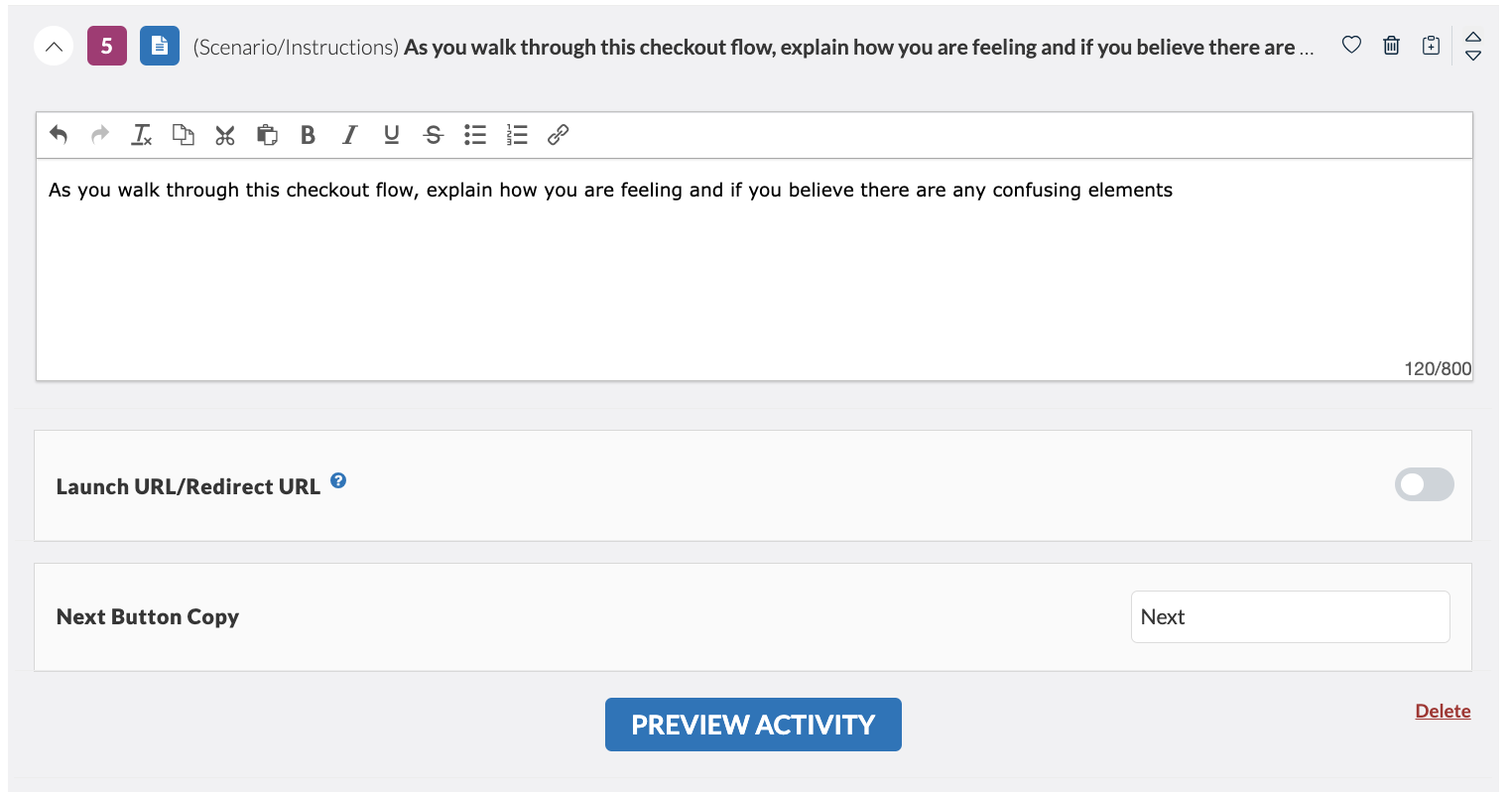

Scenario

The “Scenario” task sets the stage for your test by asking your participants to imagine they are doing something. For example, if the platform you plan to test is an e-commerce website for pet products, the scenario task may read something like: “Imagine you are browsing for dog food made from natural ingredients.” A well-written scenario task helps your participants express how they really feel about your product. In addition to setting the stage, the scenario task typically reminds your participants to speak out loud throughout the test and share their thoughts. This gives you the best possible insights and allows you to draw powerful conclusions about your product’s overall usability.

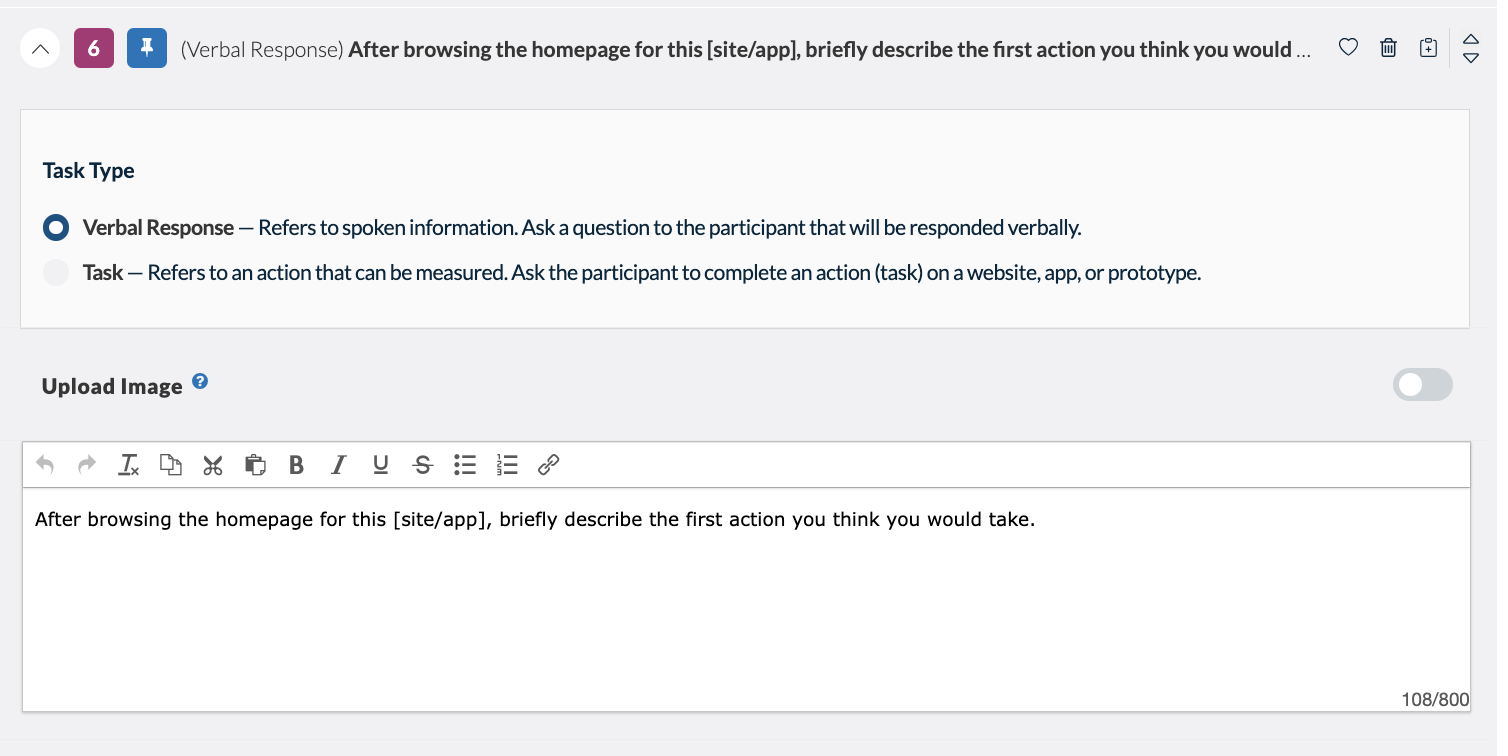

Task / Verbal Response

The “Verbal Response” task will direct your participant to answer a particular question out loud. For example, if at the start of your test your participants are directed to a website, the verbal response task might read “After browsing the homepage for this site, briefly describe the first action you think you would take.” This question type gives you a direct understanding of how your consumer thinks, and whether or not your platform is serving their needs.

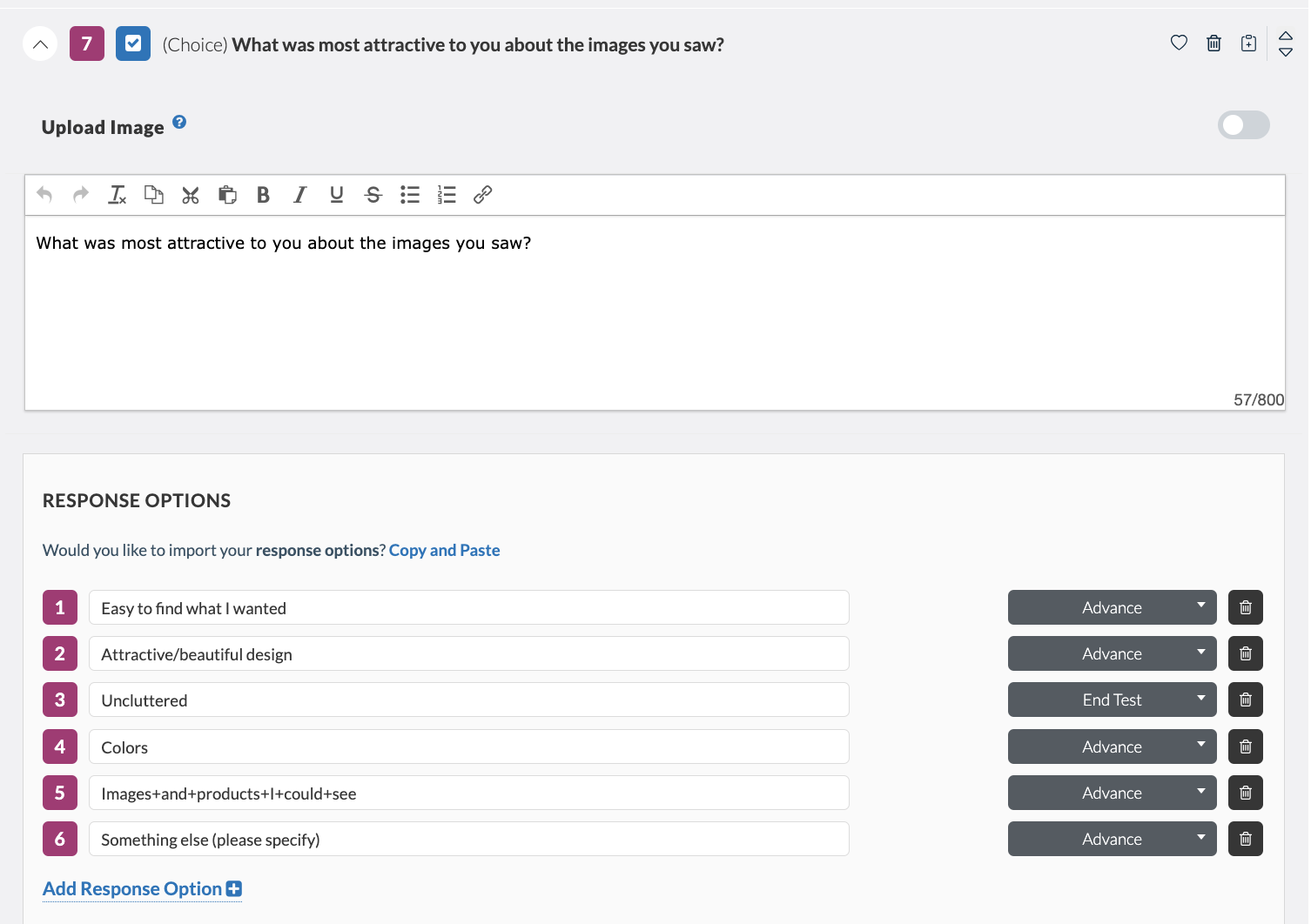

Single / Multiple Choice

The “Single / Multiple Choice” task gives you the option of creating questions with only one correct answer, or checkbox style questions where the participant can include more than one response.

Single-choice questions are effective when you are trying to determine whether some aspect of your platform or prototype is clear to the consumer. There is only a single correct answer, and the rest of the options are distractors, so participants must fully understand the content to pick the correct answer from the list of options. For single choice questions, you will receive only one response per participant.

Multiple choice questions are effective when you are trying to get a better understanding of how the majority of your audience feels. Perhaps in your results, you see that two or three answer choices are being selected more often than others. This may give you an idea of what’s working within your platform and what needs improvement. For multiple choice questions, each participant’s response can include anywhere from one answer choice up to all of the answer choices you have specified. For example, if you create a multiple choice question with five possible answers, a participant may select one, two, three, four, or all five of them.
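To spot the two or three answer choices that stand out, you can tally how many participants selected each option. The Python sketch below assumes a hypothetical export in which each participant's checked answers arrive as a list of strings; the option names and data are made up for illustration and do not reflect the actual Userlytics export format.

```python
from collections import Counter

# Hypothetical, made-up responses to one multiple choice question:
# each inner list holds the answer choices a single participant checked.
responses = [
    ["Price", "Shipping speed"],
    ["Price"],
    ["Price", "Product reviews", "Shipping speed"],
    ["Return policy"],
    ["Price", "Shipping speed"],
]

def tally_choices(responses):
    """Count how many participants selected each answer choice."""
    counts = Counter()
    for selected in responses:
        # set() ensures a participant is counted at most once per choice
        counts.update(set(selected))
    return counts

counts = tally_choices(responses)
n = len(responses)
for choice, count in counts.most_common():
    print(f"{choice}: {count}/{n} participants ({100 * count / n:.0f}%)")
```

Because each participant can pick several options, the percentages are shares of participants and can add up to more than 100%.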

You will notice that here you also have the option to use question logic, which allows a tester to advance in, or be disqualified from, a test based on your test requirements.

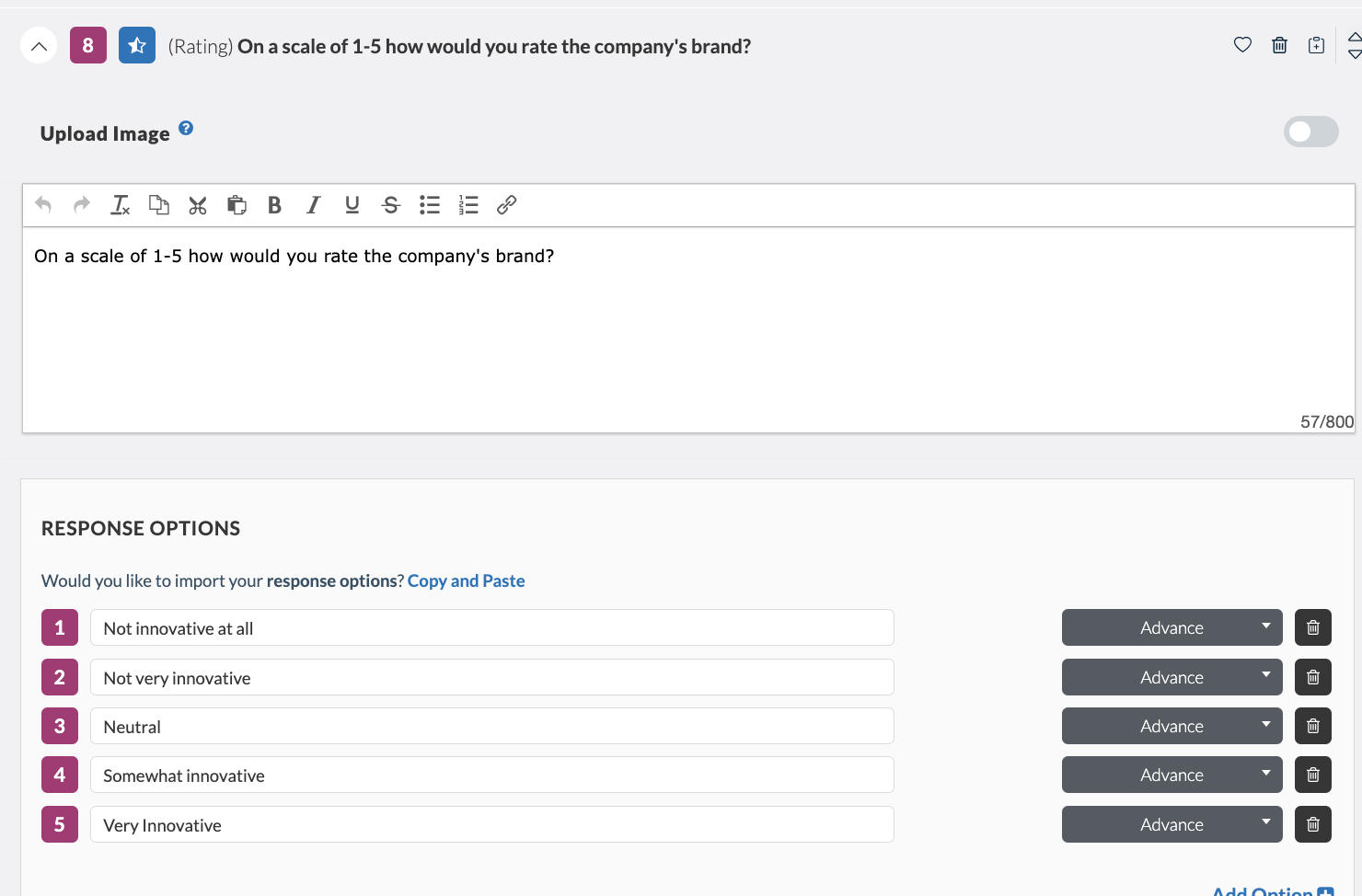

Rating

“Rating” questions use a scale to measure degrees of opinion among a particular group. We often measure attitudes, perceptions, beliefs, preferences, and self-reported behavior using rating-scale questions. The attitudinal data produced from rating-scale questions helps us understand how users perceive our product or service.

An example of a popular rating task is asking your participants how they perceived some aspect of your website, app or prototype on a scale, with the lowest number being the worst and the highest number being the best. Rating questions can have as many answer choices and display values (numbers, words, etc.) as the test creator wants. If there are five answer choices, for example, you will receive one response per participant between “1” and “5.”
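Since each participant returns a single number on the scale, a quick summary is the mean, the median, and how many participants picked each point. This is a minimal Python sketch assuming the ratings are already collected as integers from 1 to 5; the sample values are hypothetical.

```python
from collections import Counter
from statistics import mean, median

# Hypothetical 1-5 ratings, one per participant.
ratings = [4, 5, 3, 4, 2, 5, 4]

def summarize_ratings(ratings, scale_max=5):
    """Return mean, median and the count of responses at each scale point."""
    if any(r < 1 or r > scale_max for r in ratings):
        raise ValueError("rating outside the 1..scale_max range")
    counts = Counter(ratings)
    # Include every scale point, even ones nobody chose.
    distribution = {point: counts.get(point, 0) for point in range(1, scale_max + 1)}
    return mean(ratings), median(ratings), distribution

avg, med, dist = summarize_ratings(ratings)
print(f"mean={avg:.2f} median={med} distribution={dist}")
```

Looking at the full distribution alongside the mean helps reveal polarized opinions that an average alone would hide.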

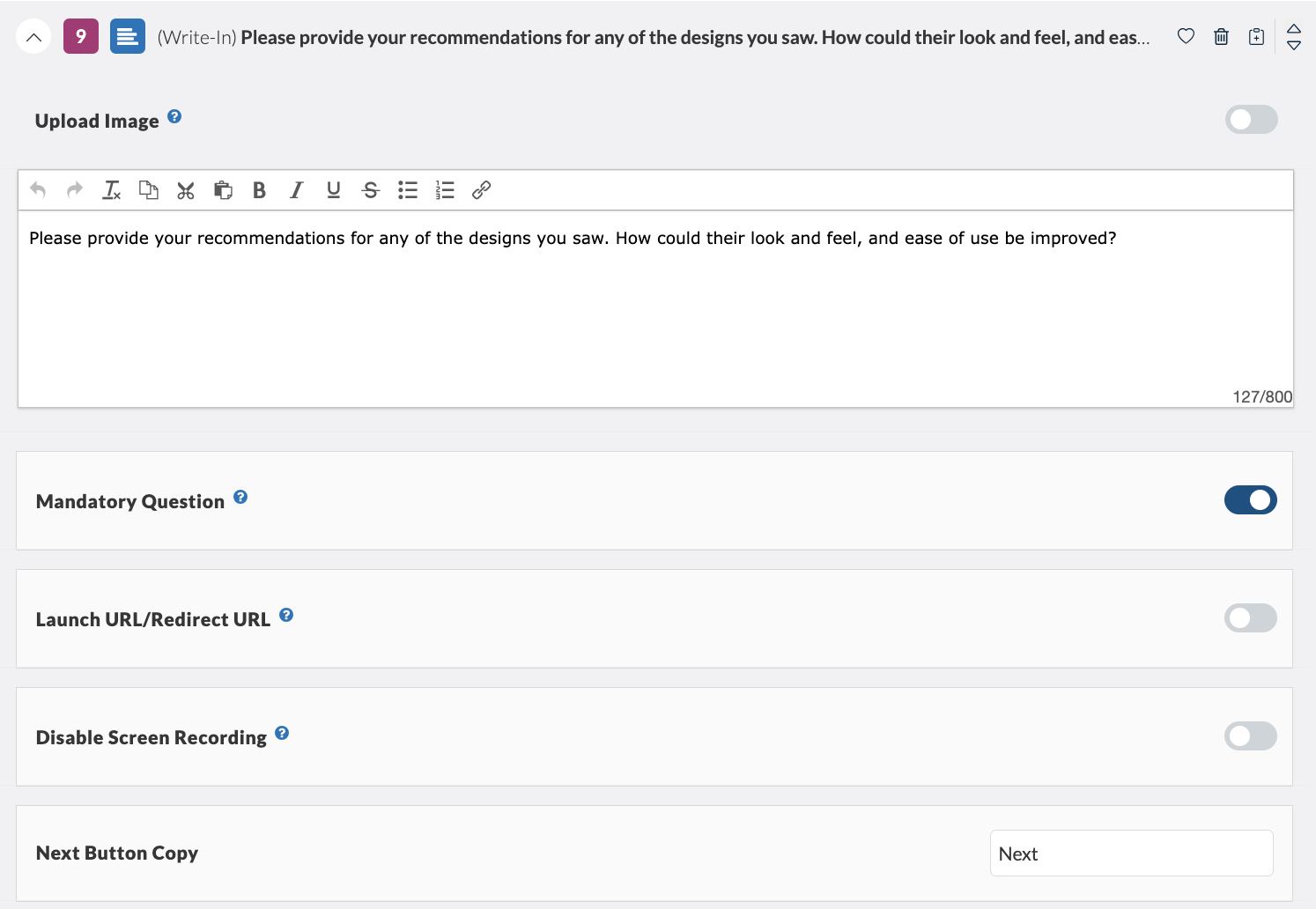

Write-in Response

“Write-in Response” tasks allow you to ask the participant an open-ended question and have them respond via written response. Some participants may feel more comfortable answering questions honestly via written response than via verbal prompts.

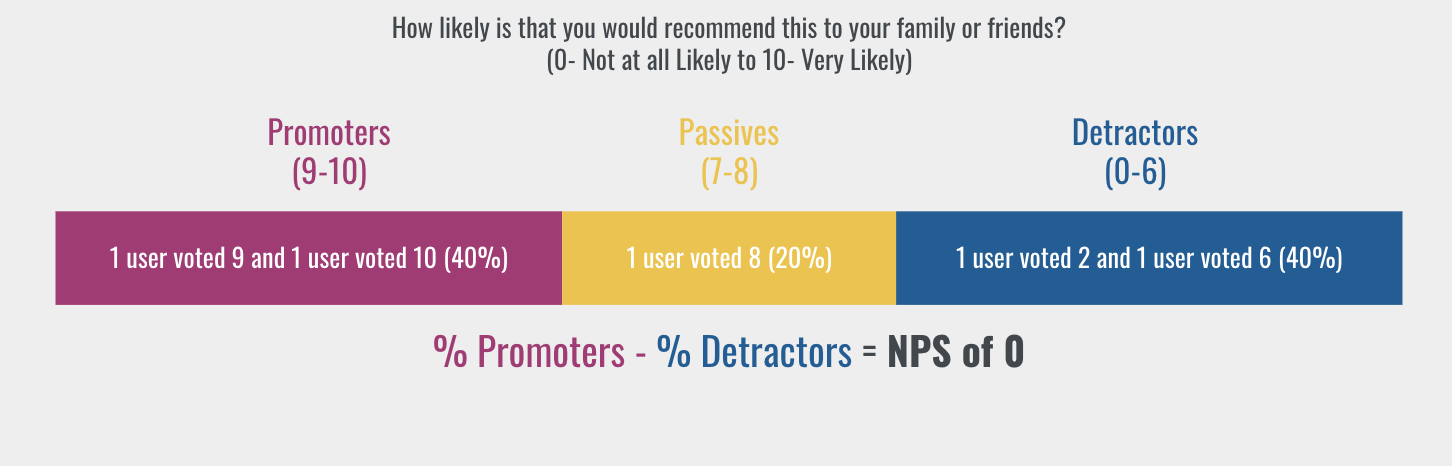

Net Promoter Score (NPS)

The “Net Promoter Score (NPS)” task measures how likely customers are to recommend a company, a product, or a service to a friend or colleague. According to Netpromoter.com, you can calculate your NPS using the answer to a key question, using a 0-10 scale: How likely is it that you would recommend [brand] to a friend or colleague?

Respondents are grouped as follows:

- Promoters (score 9-10) are loyal enthusiasts who will keep buying and refer others, fueling growth.

- Passives (score 7-8) are satisfied but unenthusiastic customers who are vulnerable to competitive offerings.

- Detractors (score 0-6) are unhappy customers who can damage your brand and impede growth through negative word-of-mouth.

For this task, each participant’s answer is a number between 0 and 10. Subtracting the percentage of Detractors from the percentage of Promoters yields the Net Promoter Score, which can range from a low of -100 (if every customer is a Detractor) to a high of 100 (if every customer is a Promoter).

At one end of the spectrum, if all of the surveyed customers gave a score lower than or equal to 6, the NPS would be -100. At the other end, if all of the customers answered the question with a 9 or 10, the Net Promoter Score would be 100. The NPS can help you determine whether or not you correctly identified your customers’ needs in your prototype or platform.
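The grouping and subtraction described above fit in a few lines of code. This Python sketch assumes you have each participant's 0-10 answer as an integer; the function name and sample scores are illustrative only.

```python
def net_promoter_score(scores):
    """NPS = % Promoters (9-10) minus % Detractors (0-6); ranges from -100 to 100."""
    if not scores:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("NPS answers must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7-8) count toward the total but not toward either group.
    return 100 * (promoters - detractors) / len(scores)

# Hypothetical answers: 5 Promoters, 2 Passives, 3 Detractors out of 10.
scores = [10, 9, 8, 7, 6, 3, 10, 9, 9, 5]
print(net_promoter_score(scores))  # 20.0
```

Note that Passives still lower the score indirectly, because they enlarge the denominator without adding Promoters.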

System Usability Scale (SUS)

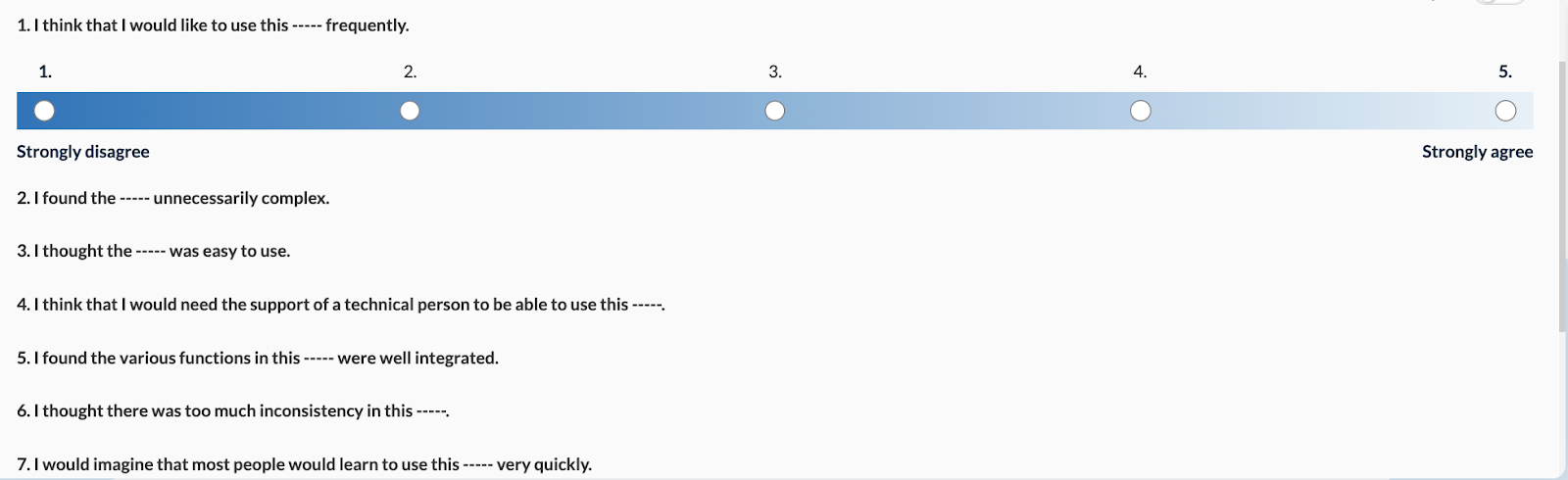

The “System Usability Scale (SUS)” task provides a quick, reliable tool for measuring product usability. It consists of a 10-item questionnaire with five response options for respondents, ranging from Strongly agree to Strongly disagree. The SUS allows you to evaluate a wide variety of products and services, including hardware, software, mobile devices, websites and applications. Our platform automatically generates the SUS questionnaire, leaving a space for you to insert the type of asset your participants are testing.

The benefits of using SUS include that it:

- Is a very easy scale to administer to participants

- Can be used on small sample sizes with reliable results

- Is valid – it can effectively differentiate between usable and unusable systems

SUS questions have five answer options, with “1” corresponding to Strongly disagree and “5” corresponding to Strongly agree. You will receive one response per participant between the numbers “1” and “5.”

Calculate the SUS score from your responses as follows:

Step 1: Convert each response into points for each of the 10 questions

- Strongly Disagree: 1 point

- Disagree: 2 points

- Neutral: 3 points

- Agree: 4 points

- Strongly Agree: 5 points

Step 2: Calculate

- X = Sum of the points for all odd-numbered questions - 5

- Y = 25 - Sum of the points for all even-numbered questions

- SUS Score = (X + Y) x 2.5

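The rationale behind the calculation is intuitive. After conversion, each question contributes between 0 and 4 points, so the ten questions add up to at most 40; multiplying by 2.5 scales this to a maximum total score of 100, giving each question a weight of 10 points.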
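The scoring steps can be sketched in Python as follows, assuming the ten responses are stored in question order as integers from 1 (Strongly Disagree) to 5 (Strongly Agree). Each odd-numbered item contributes its points minus 1 and each even-numbered item contributes 5 minus its points, which is why the odd-item sum is reduced by 5 overall. The function name is illustrative.

```python
def sus_score(responses):
    """Standard SUS score (0-100) from ten responses coded 1 to 5, in question order."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    odd = responses[0::2]   # questions 1, 3, 5, 7, 9 (positively worded)
    even = responses[1::2]  # questions 2, 4, 6, 8, 10 (negatively worded)
    x = sum(odd) - 5        # each odd item contributes (points - 1)
    y = 25 - sum(even)      # each even item contributes (5 - points)
    return (x + y) * 2.5

# One hypothetical participant: odd items sum to 21, even items to 10.
print(sus_score([4, 2, 4, 2, 5, 1, 4, 2, 4, 3]))  # 77.5
```

To report a study-level result, compute the score per participant first and then average those scores.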

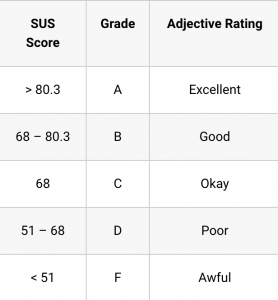

The average SUS score is 68, which means a score of 68 places you at the 50th percentile. Below are general guidelines for interpreting SUS scores:

Your SUS task will appear to your participants as follows:

Card Sorting

Card sorting is a popular method for understanding the mental model of the user. Instead of organizing a website by your corporate structure, you base it on how the users think by having them sort items into categories.

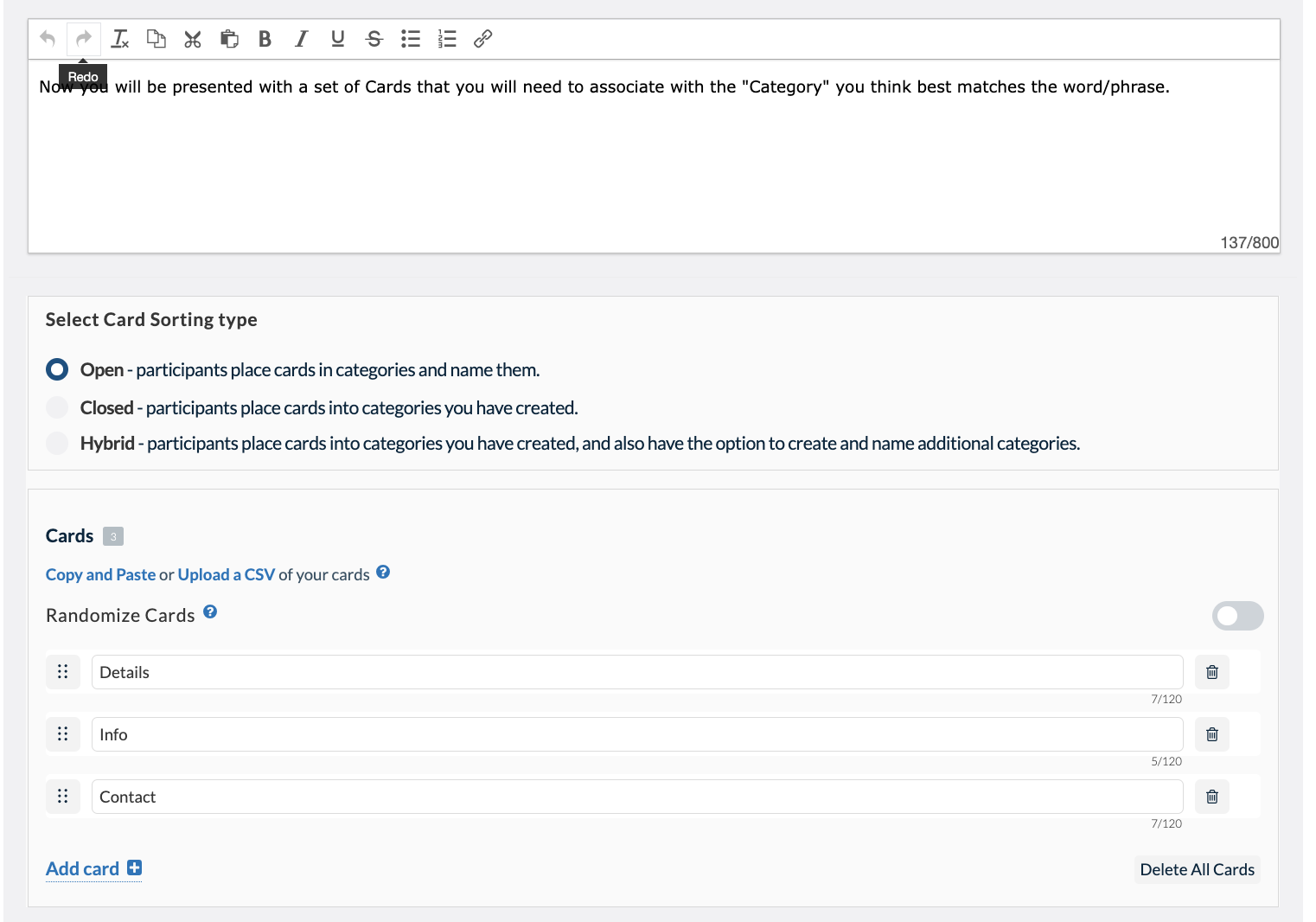

You have the option of creating three different types of card sorting tasks:

- Open Card Sort: Participants create and name their own categories

- Closed Card Sort: You predetermine the card sorting categories

- Hybrid Card Sort: You predetermine some categories and let participants create their own additional categories if they would like to

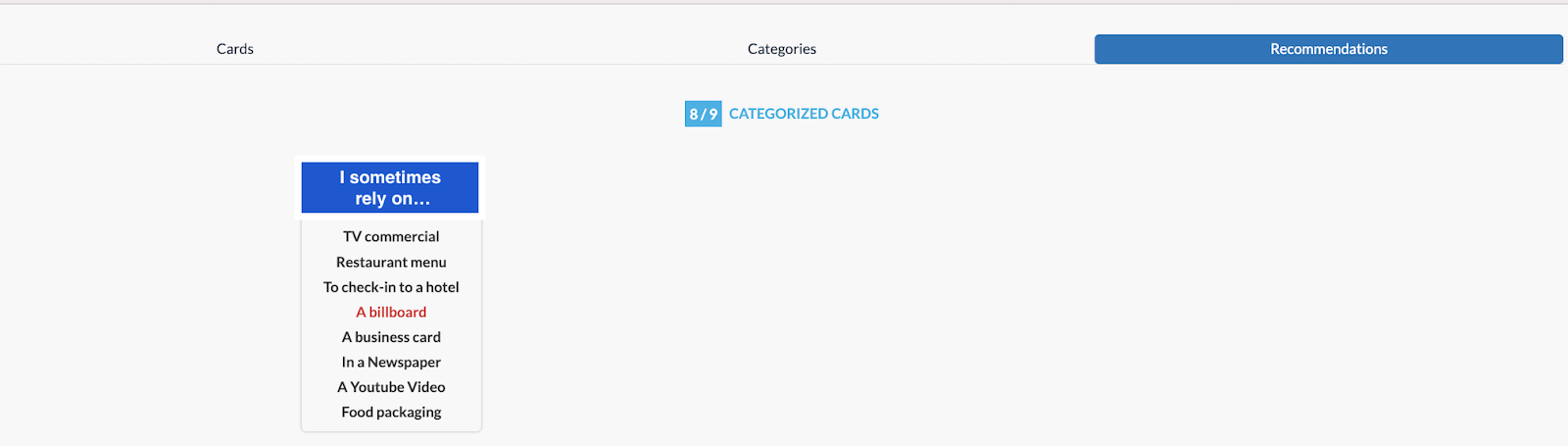

Your participants’ card sorting results will be available in the “metrics” portion of your Userlytics dashboard. Under your metrics, there will be three sections: Cards, Categories and Recommendations.

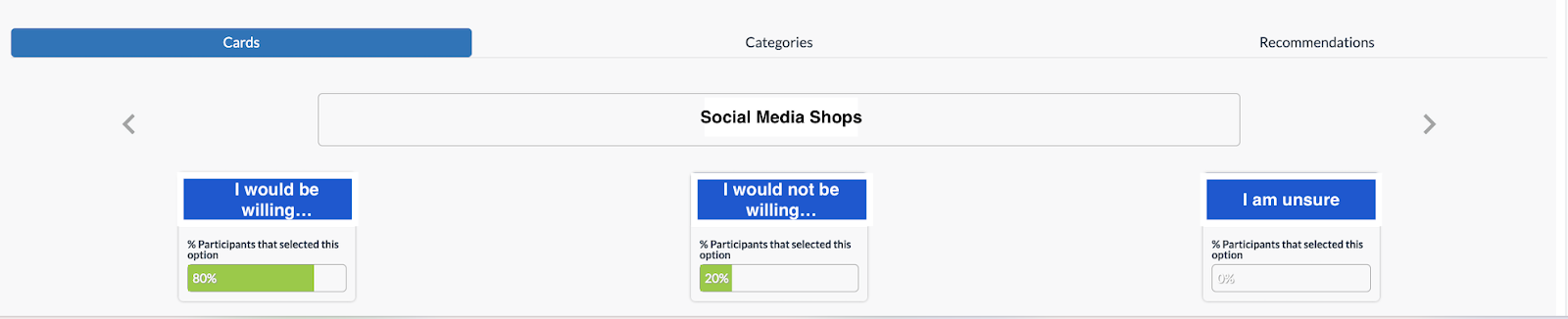

Card Metrics

Clicking “Cards” on the left-hand side will give you information on how your participants sorted each of your task Cards. You can click the left and right arrows to sort through each of your Cards and see what categories your participants placed them in.

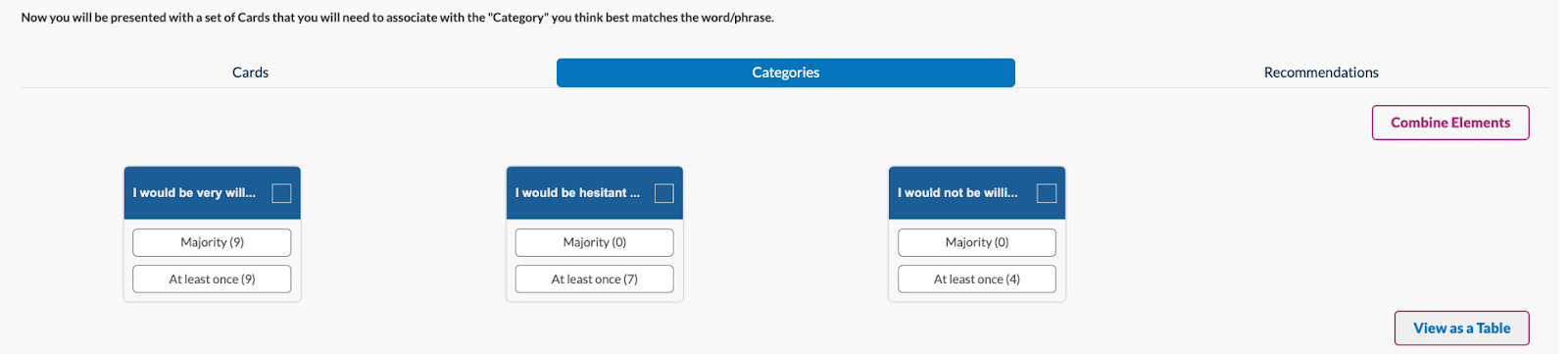

Categories Metrics

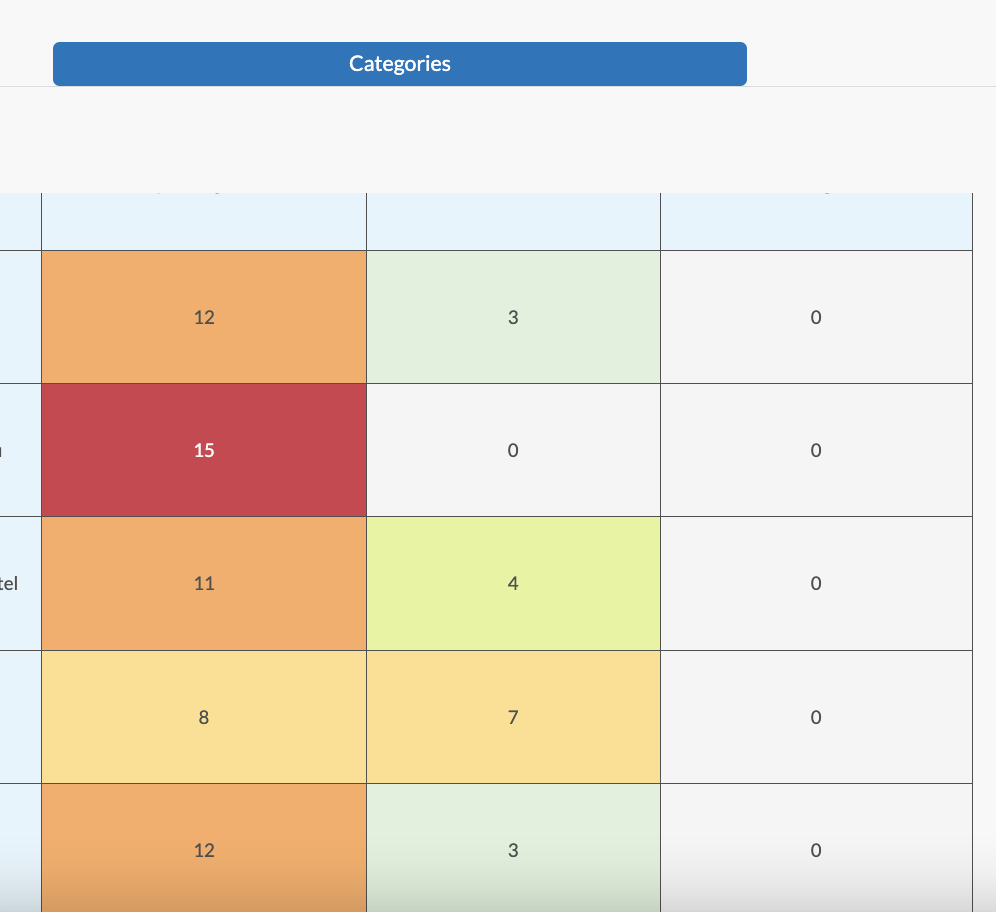

The middle section, “Categories,” gives you information about any Categories your participants created, the percentage of Cards your participants listed under each Category, and more. There are two ways to view this section. The first is “View as Cards,” which will let you know what Cards were placed in each Category by the majority of participants, and which Cards were placed in each Category at least once.

Clicking on any of these metrics will expand them and give you greater detail on the percentage of participants that placed specific Cards in each Category.

The second way to view metrics for the “Categories” section is “View as Table.” In this viewing mode, a table will appear showing the number of test participants that placed each Card within the given Categories. Any boxes that appear in red signify that all participants placed that Card within the same Category. The colors within the table appear lighter as fewer participants place those Cards within a specific Category. This table gives you a visual representation of how your test participants interpreted your digital asset’s informational structure.
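The “View as Table” idea, counting how many participants placed each Card in each Category, can be approximated from raw sorts. This Python sketch assumes a hypothetical format where each participant's sort is a dictionary from Card name to Category name; it is not Userlytics' export format or its internal algorithm. The agreement value is the share of participants who chose each Card's most common Category, so 1.0 corresponds to every participant agreeing.

```python
from collections import defaultdict

# Hypothetical sorts from three participants: Card -> Category.
sorts = [
    {"Dry food": "Food", "Chew toys": "Toys", "Leashes": "Accessories"},
    {"Dry food": "Food", "Chew toys": "Toys", "Leashes": "Toys"},
    {"Dry food": "Food", "Chew toys": "Accessories", "Leashes": "Accessories"},
]

def placement_matrix(sorts):
    """Count, for every Card, how many participants placed it in each Category."""
    matrix = defaultdict(lambda: defaultdict(int))
    for participant in sorts:
        for card, category in participant.items():
            matrix[card][category] += 1
    return matrix

def agreement(matrix, n_participants):
    """Share of participants who placed each Card in its most common Category."""
    return {card: max(cats.values()) / n_participants for card, cats in matrix.items()}

matrix = placement_matrix(sorts)
for card, share in agreement(matrix, len(sorts)).items():
    print(f"{card}: {dict(matrix[card])} agreement={share:.0%}")
```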

Recommendations Metrics

The third metrics section on the right-hand side, “Recommendations,” suggests Categories and Cards you should consider removing or revising. Our algorithm creates Warnings for specific Cards and Categories that may need to be removed or renamed in order to promote clarity and consistency within your brand asset. The elements that appear in the Warnings section are placements your participants made during your test, but that are supported by only minimal data. These recommendations allow you to eliminate confusing or irrelevant aspects of your website and to strengthen your website’s organization.

Tree Testing

A “Tree test” is just another name for a reverse card sort. A tree test evaluates a hierarchical category structure, or tree, by having users find the locations in the tree where specific tasks can be completed. Including a tree testing task within your usability test can help verify the validity of the information structure and findability of different products or themes on a site, mobile app or prototype.

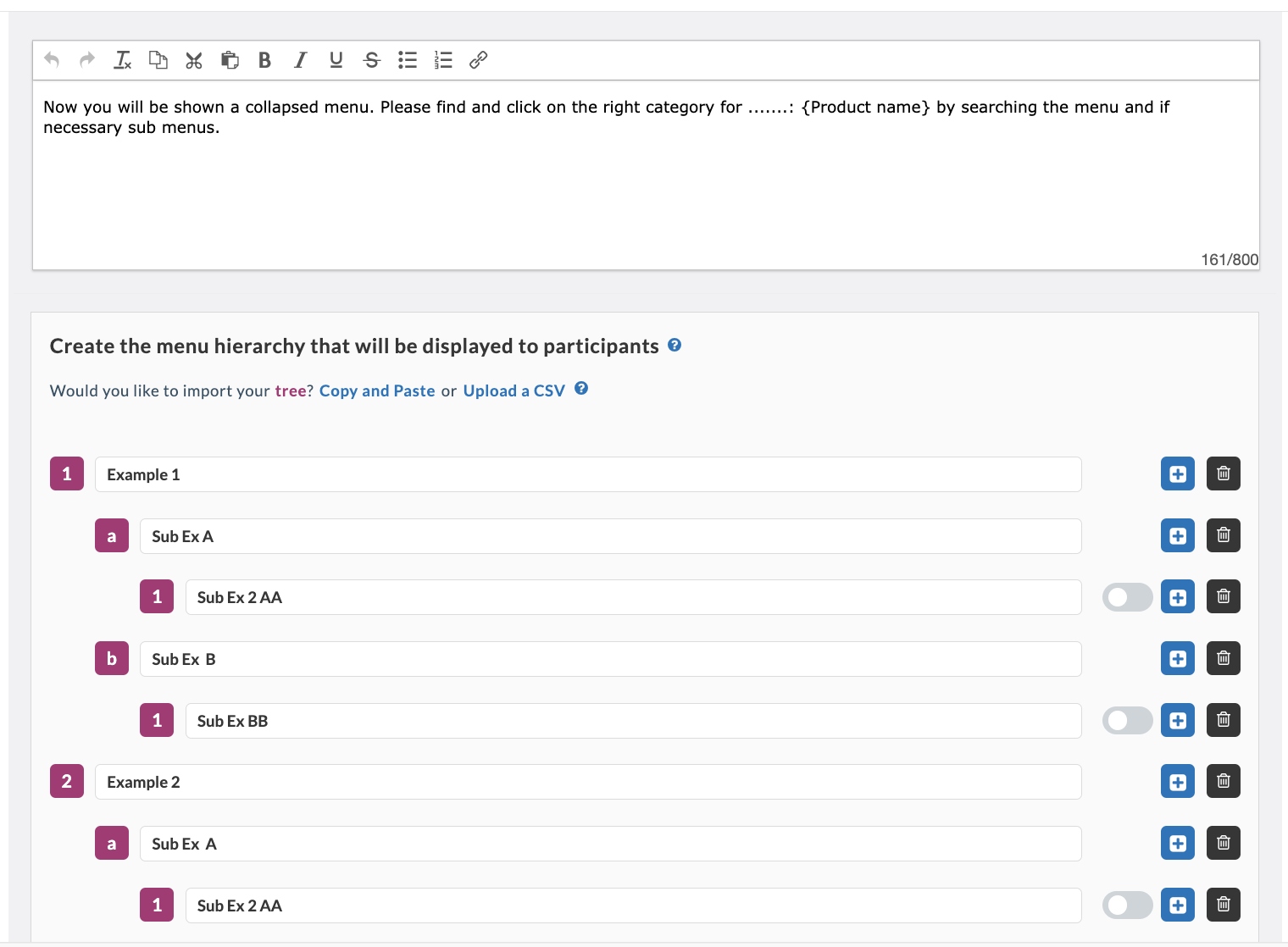

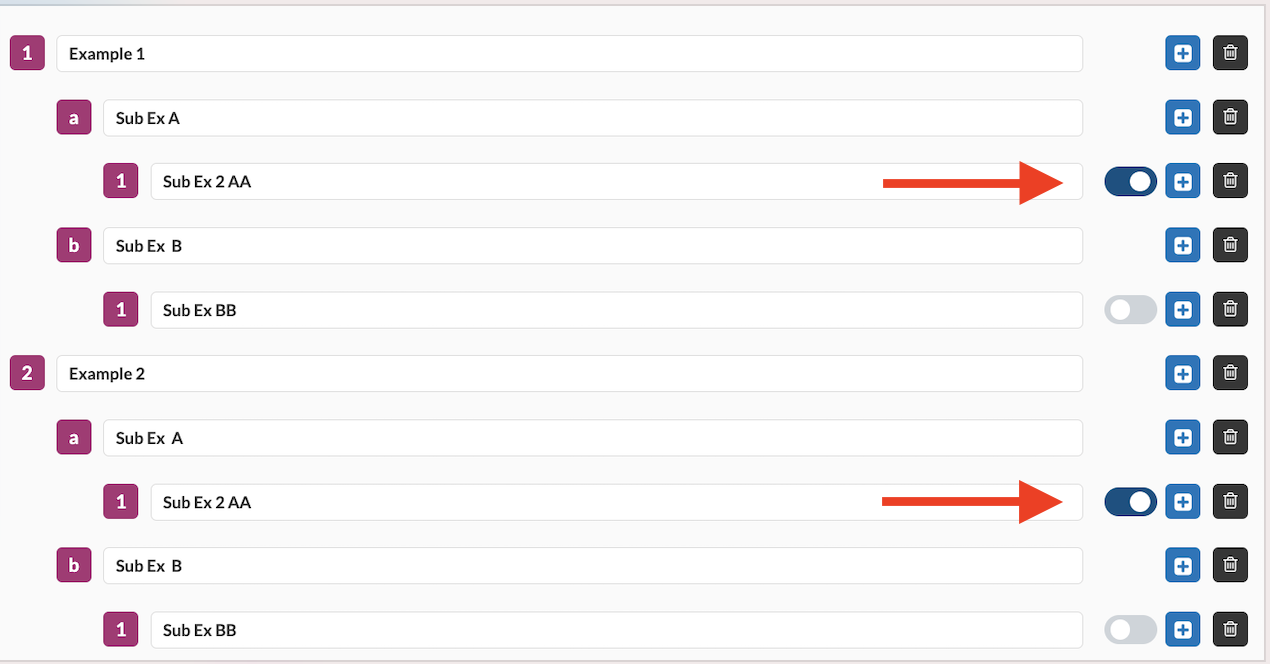

When building out your Tree Testing task, first, you will have to customize the text your testers will see. Here, you will define the product or theme your participants will be sorting into a specific category. Then, you will define your categories, or menu hierarchy. Here, you have the option of defining subcategories to get more and more specific. Your participants will be able to sort through these categories and choose where they believe the product you have specified fits best.

After naming your categories, click the toggle bar on the right-hand side to select the correct response to the task. This will allow you to view the success rate of your Tree Testing task after your participants complete the usability test.
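Conceptually, the category hierarchy you define is a tree, and the correct response is one path through it. The Python sketch below models a hypothetical pet-products menu as nested dictionaries (an empty dictionary marks a leaf category) and checks that the chosen correct answer is a reachable path. It assumes answers are leaf categories; the tree, names and helper function are illustrative and not how Userlytics stores tasks.

```python
# Hypothetical menu hierarchy; an empty dict marks a leaf category.
tree = {
    "Dogs": {
        "Food": {"Natural": {}, "Grain-free": {}},
        "Treats": {},
    },
    "Cats": {"Food": {}, "Toys": {}},
}

def leaf_paths(node, prefix=()):
    """Yield every root-to-leaf path as a tuple of category names."""
    for name, children in node.items():
        path = prefix + (name,)
        if children:
            yield from leaf_paths(children, path)
        else:
            yield path

correct_answer = ("Dogs", "Food", "Natural")
paths = list(leaf_paths(tree))
assert correct_answer in paths, "the correct answer must be a reachable category"
for path in paths:
    print(" > ".join(path))
```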

Your participants’ tree testing results will be available in the “metrics” portion of your Userlytics dashboard. Under your metrics, there will be three sections: Option Selected, First Click and Other Info.

Option Selected

Clicking “Option Selected” on the left-hand side will show you the percent of participants who chose each answer choice to your Tree Testing question.

First Click

The middle section, “First Click,” shows whether any of your participants clicked on one category option and then switched to another option before making a final decision. The pie chart shows the percentage of participants who exited from a path after entering that tree. This information is helpful because it can reveal whether your participants hesitated before selecting a final category, which may indicate that your categories need some fine-tuning to be clearer and more intuitive.

Other Info

The third section on the right-hand side, “Other Info,” gives you data about the overall results of your Tree Testing task. The information given includes the percentage of participants who located the correct path without any backtracking, the number of participants who selected the correct option on the tree, and the average time your participants spent on the task.
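The figures in “Other Info” can be reproduced from per-participant records. This Python sketch assumes a hypothetical record holding the final path selected, whether the participant backtracked, and the time spent; the field names and sample data are made up, and the dashboard may compute its metrics differently.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class TreeAttempt:
    """One participant's hypothetical tree testing record."""
    selected: tuple      # final category path chosen
    backtracked: bool    # True if the participant left a path before deciding
    seconds: float       # time spent on the task

def tree_test_summary(attempts, correct):
    if not attempts:
        raise ValueError("no attempts to summarize")
    n = len(attempts)
    successes = [a for a in attempts if a.selected == correct]
    direct = [a for a in successes if not a.backtracked]  # correct, no backtracking
    return {
        "success_count": len(successes),
        "success_rate": 100 * len(successes) / n,
        "direct_success_rate": 100 * len(direct) / n,
        "avg_seconds": mean(a.seconds for a in attempts),
    }

correct = ("Dogs", "Food", "Natural")
attempts = [
    TreeAttempt(("Dogs", "Food", "Natural"), False, 32.0),
    TreeAttempt(("Dogs", "Food", "Natural"), True, 51.0),
    TreeAttempt(("Dogs", "Treats"), True, 40.0),
    TreeAttempt(("Dogs", "Food", "Natural"), False, 25.0),
]
print(tree_test_summary(attempts, correct))
```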

Depending on the results of your Tree Testing task, you may receive a red highlighted “WARNING” button. Clicking the button tells you whether the success rate of your task is lower than the industry average. If it is, you may need to consider revising your categories for added clarity.

Remember to Preview Tasks!

After building out your test tasks, be sure to preview each one of them to ensure they are easy to understand, well-written, and work well with your test asset. You can choose to run a “Quick Preview,” which allows you to check the flow of tasks/questions through an interactive HTML interface without actually downloading the Userlytics Recorder or showing you your test asset. Or, you can run a “Recorder Preview,” which will use the Userlytics Recorder and simulate a real test without any real recording or uploading taking place.
